文章目錄

一、下載安裝IDEA

二、搭建本地hadoop環境(window10)

三、安裝Maven

四、新建項目和模塊

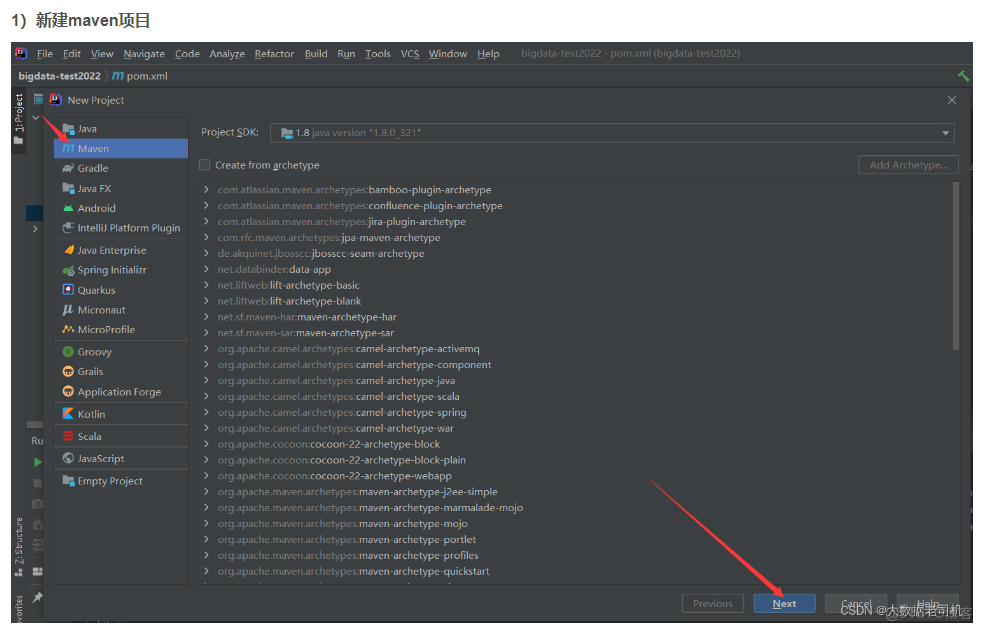

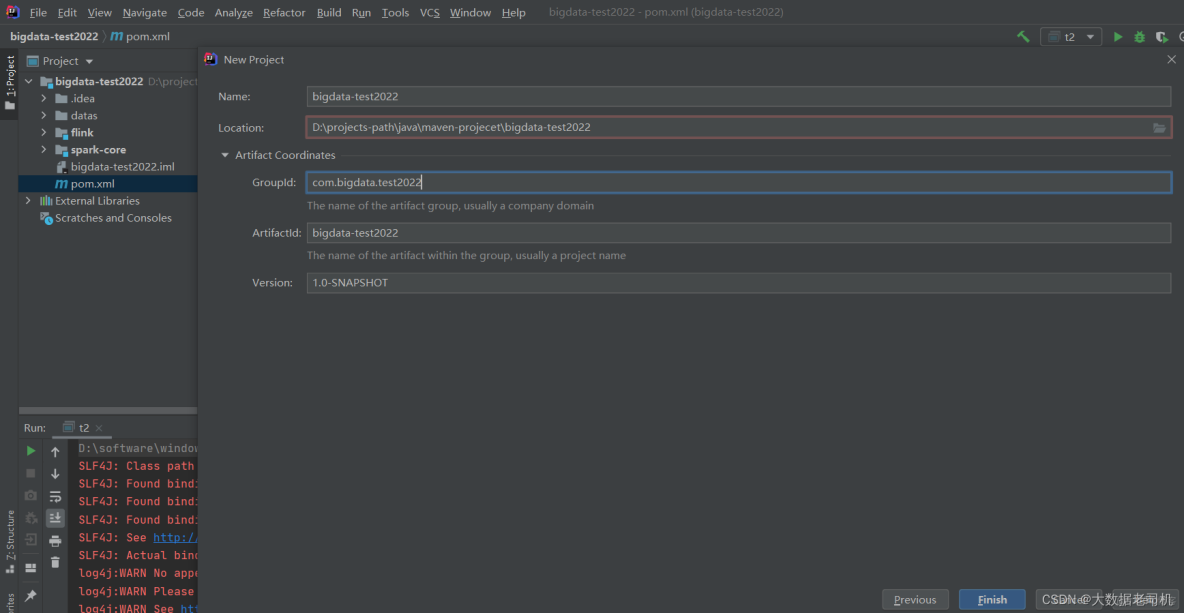

1)新建maven項目

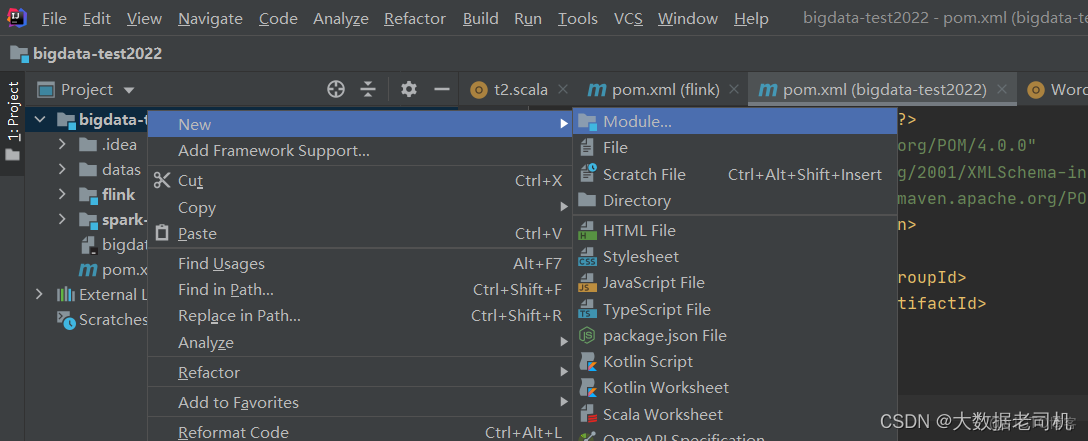

2)新建flink模塊

五、配置IDEA環境(scala)

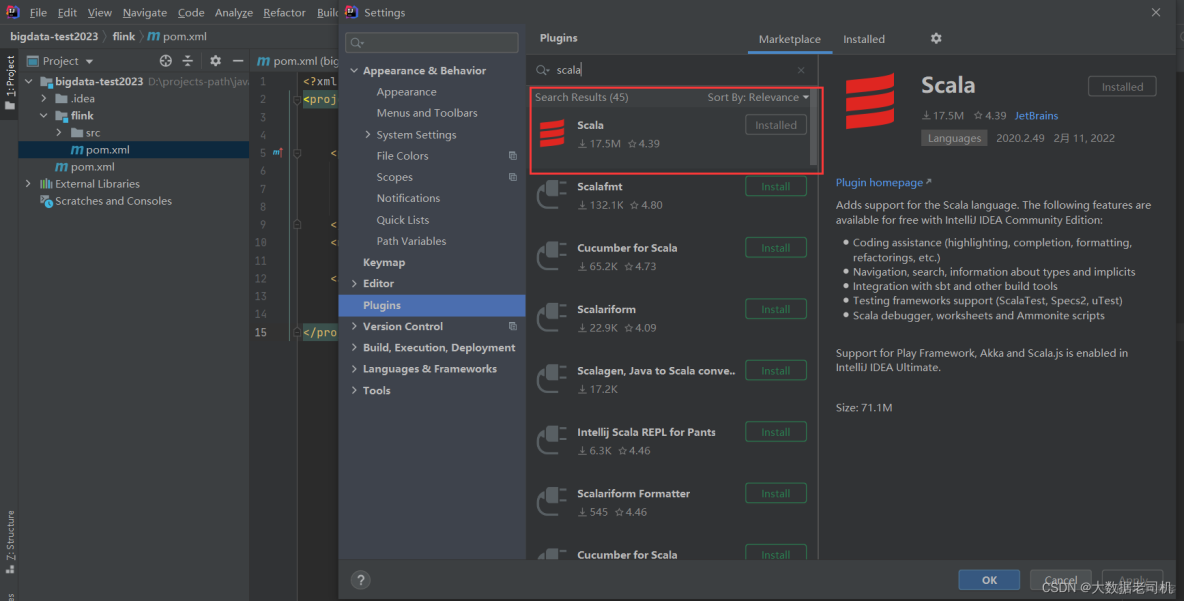

1)下載安裝scala插件

2)配置scala插件到模塊或者全局環境

3)創建scala項目

4)DataStream API配置

1、Maven配置

2、示例演示

5)Table API & SQL配置

1、Maven配置

2、示例演示

6)HiveCatalog

1、Maven配置

2、Hadoop與Hive Guava衝突問題

3、示例演示

7)下載flink並本地啓動集羣(window)

8)完成版配置

1、maven配置

2、log4j2.xml配置

3、hive-site.xml配置

六、配置IDEA環境(java)

1)maven配置

2)log4j2.xml配置

3)hive-site.xml配置

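I. Download and install IDEA

II. Set up a local Hadoop environment (Windows 10)

See my earlier article: Big Data Hadoop: Deploying a Hadoop + Hive Environment (Windows 10)

III. Install Maven

See my earlier article: Java: Maven Explained in Detail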

四、新建項目和模塊

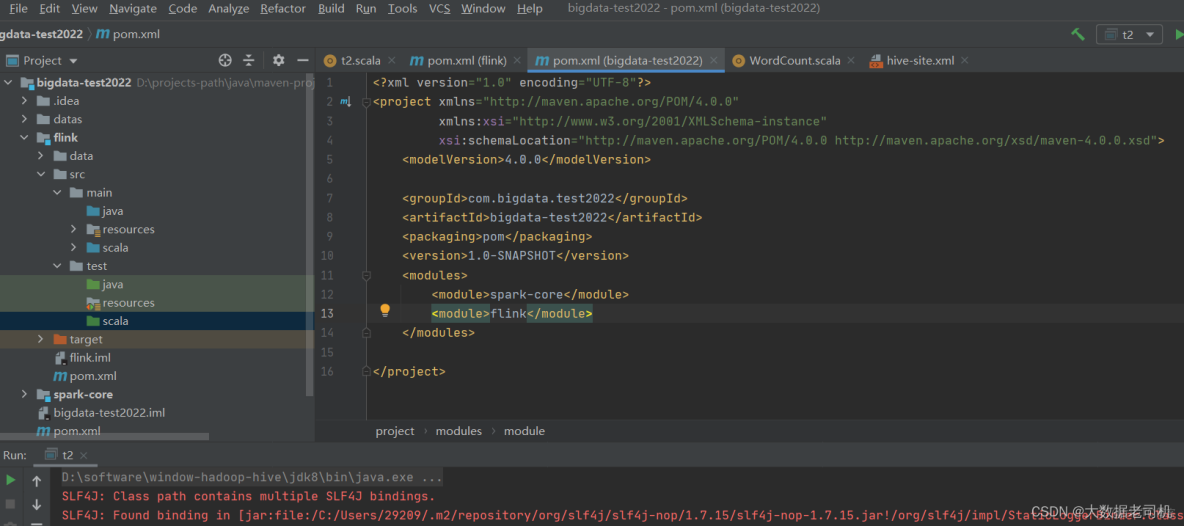

因為之前我創建過了,所以會標紅

把自動生成的src刪掉,以後是通過模塊來管理項目,因為一個項目一般會包含很多模塊。

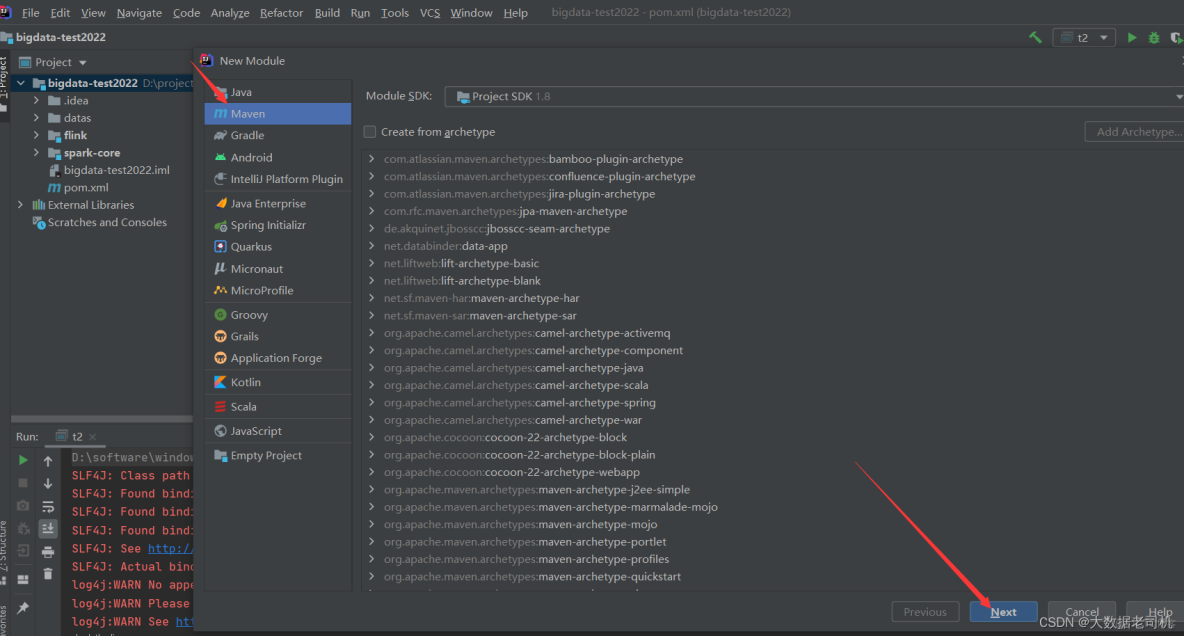

2)新建flink模塊

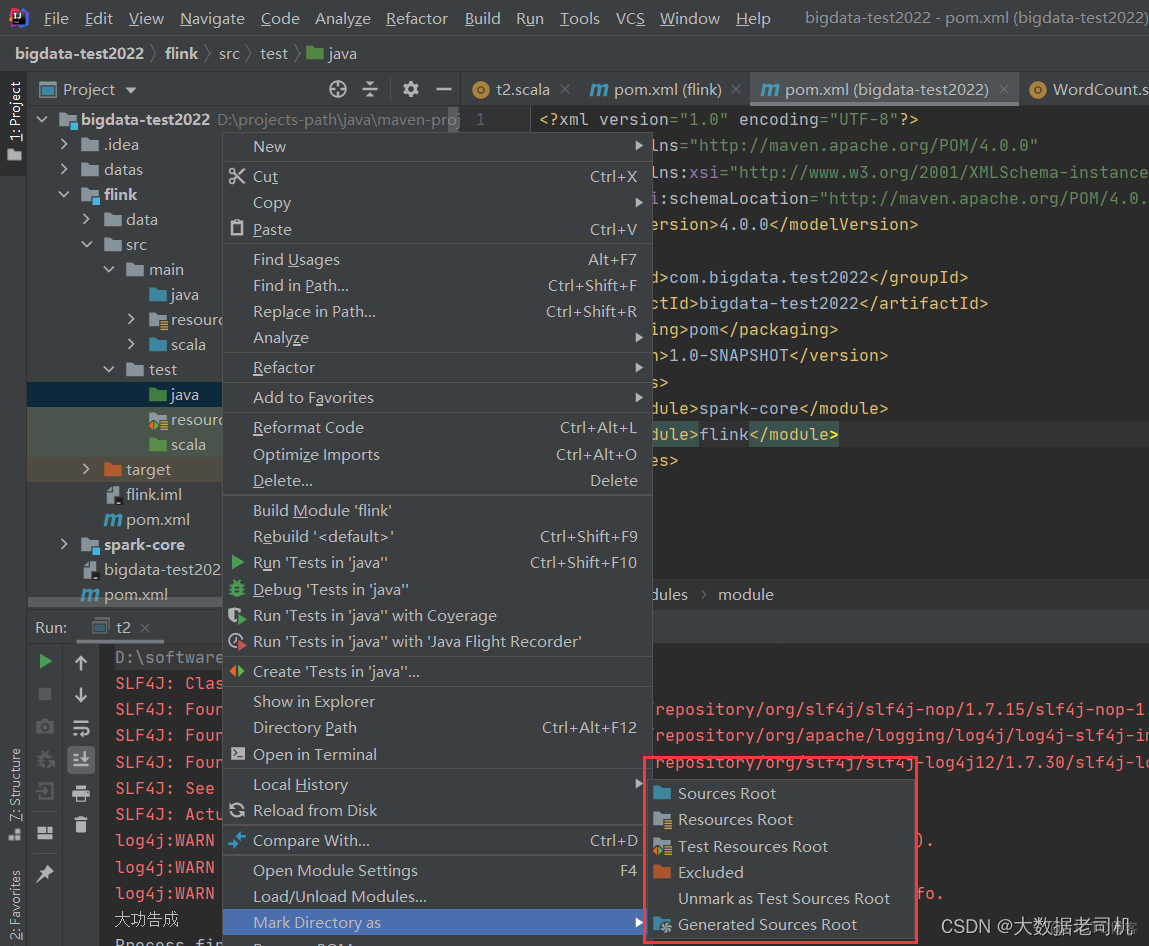

目錄結構,新建沒有的目錄

設置目錄屬性

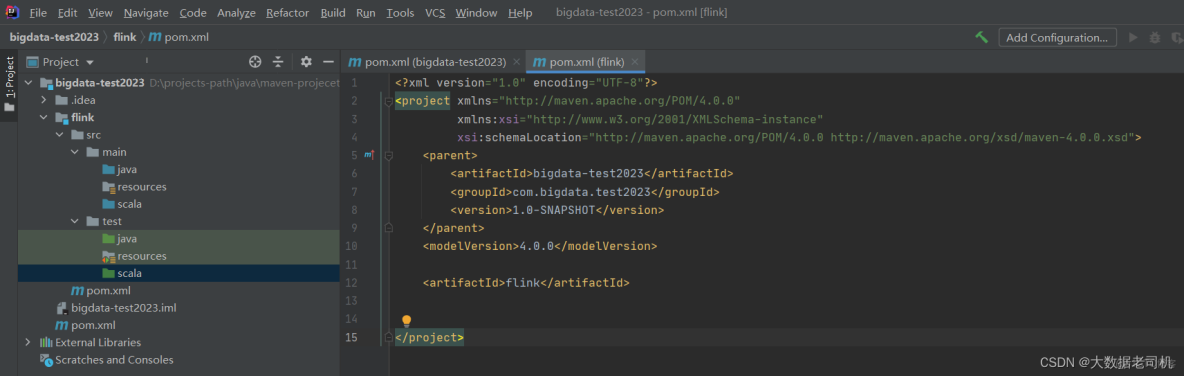

因為之前創建過項目,所以這裏創建一個新項目來演示:bigdata-test2023

五、配置IDEA環境(scala)

1)下載安裝scala插件

File-》Settings

intellij IDEA本來是不能開發Scala程序的,但是通過配置是可以的,我之前已經裝過了,沒裝過的小夥伴,點擊這裏安裝即可。

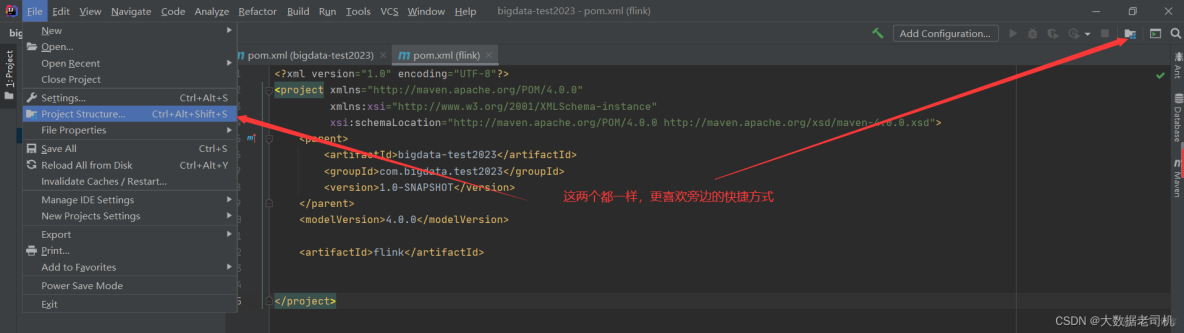

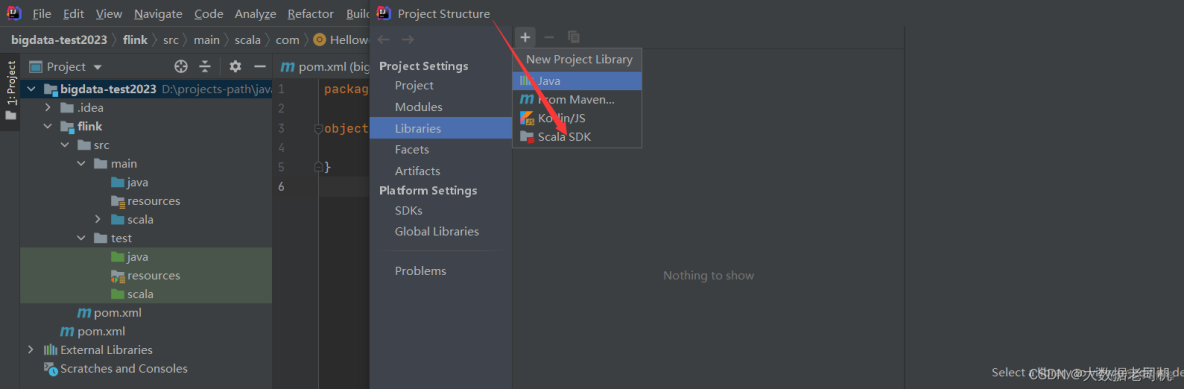

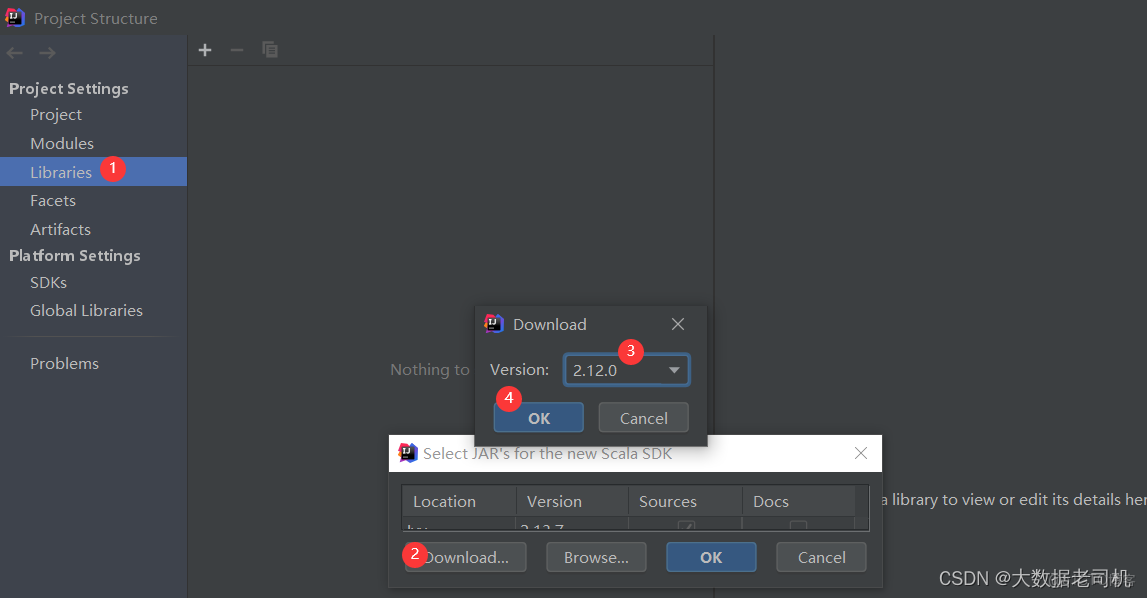

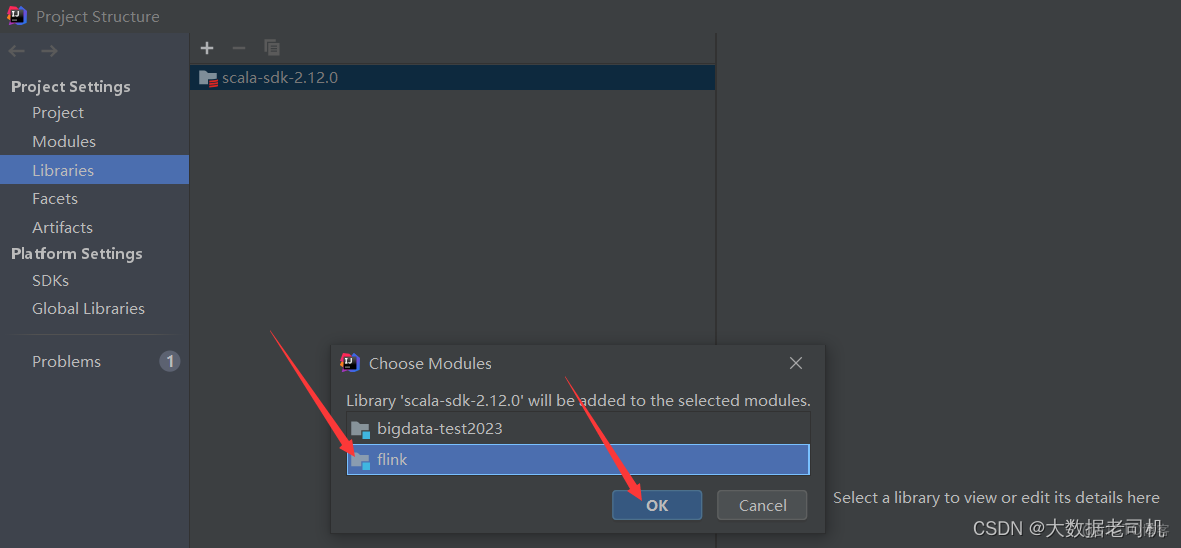

2)配置scala插件到模塊或者全局環境

添加完scala插件之後就可以創建scala項目了

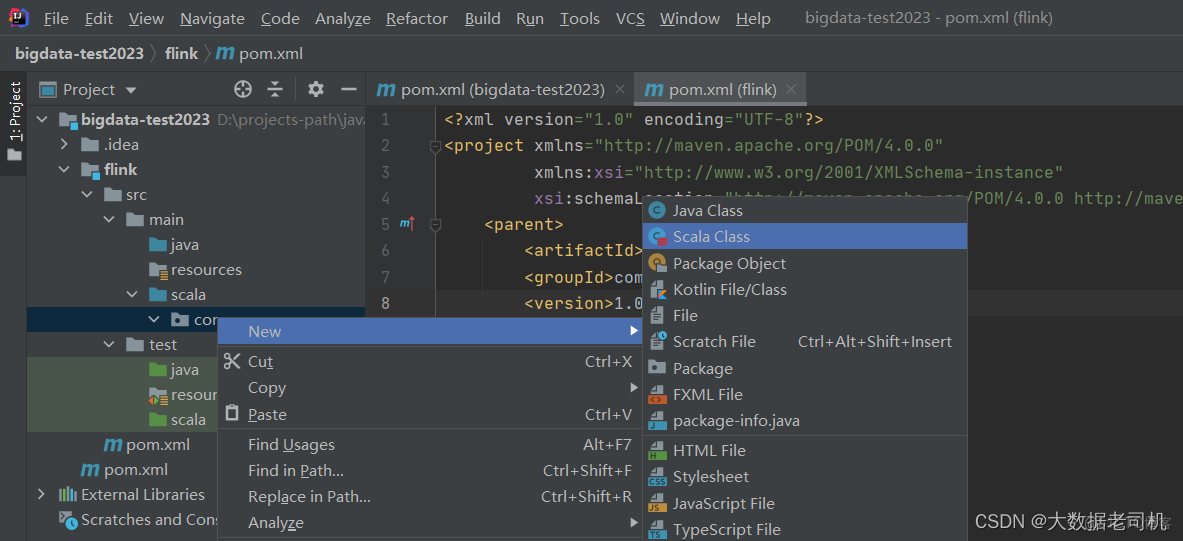

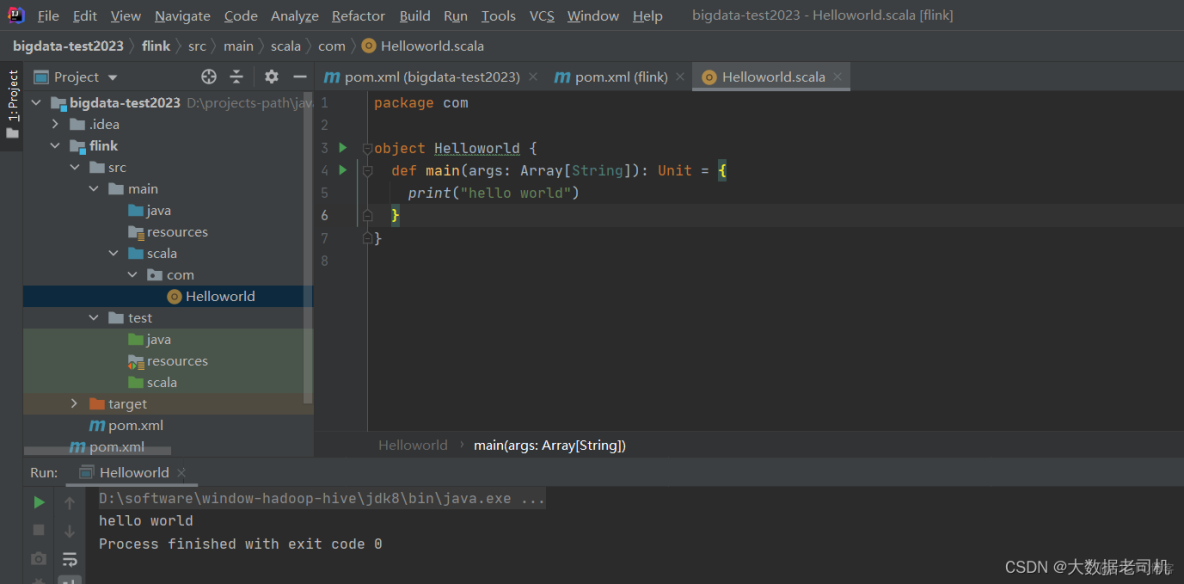

3)創建scala項目

創建Object類

【温馨提示】類只會被編譯,不能直接被執行。

【温馨提示】類只會被編譯,不能直接被執行。

4)DataStream API配置

1、Maven配置

在flink模塊目錄下pom.xml配置如下內容:

【温馨提示】這裏的scala版本要與上面插件版本一致

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-scala_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-streaming-scala -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_2.12</artifactId>

<version>1.14.3</version>

</dependency>


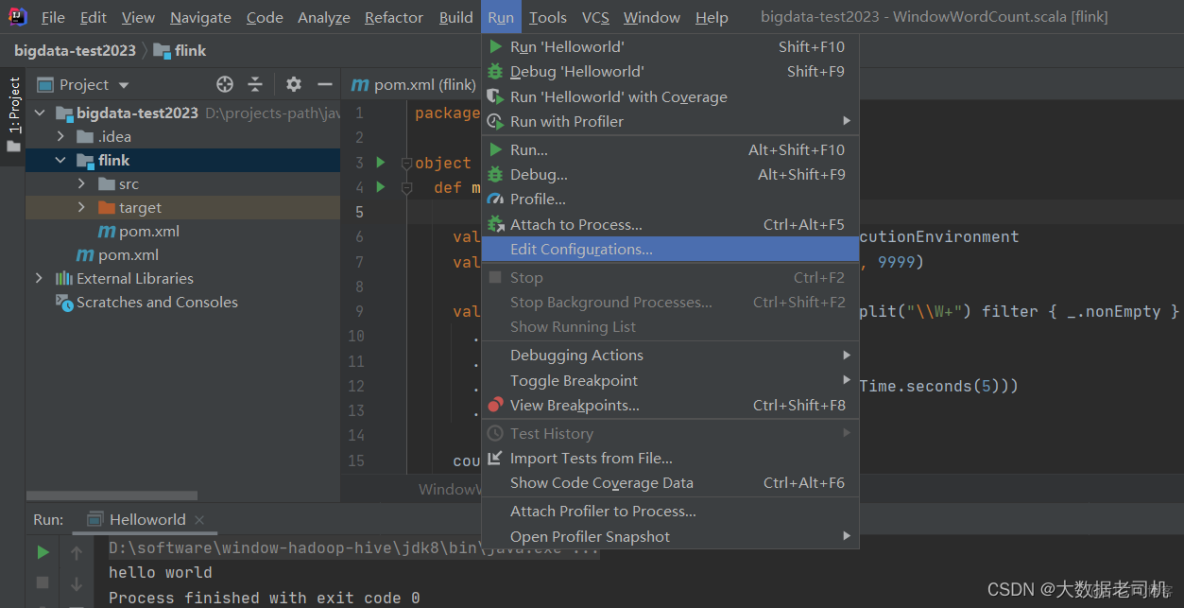

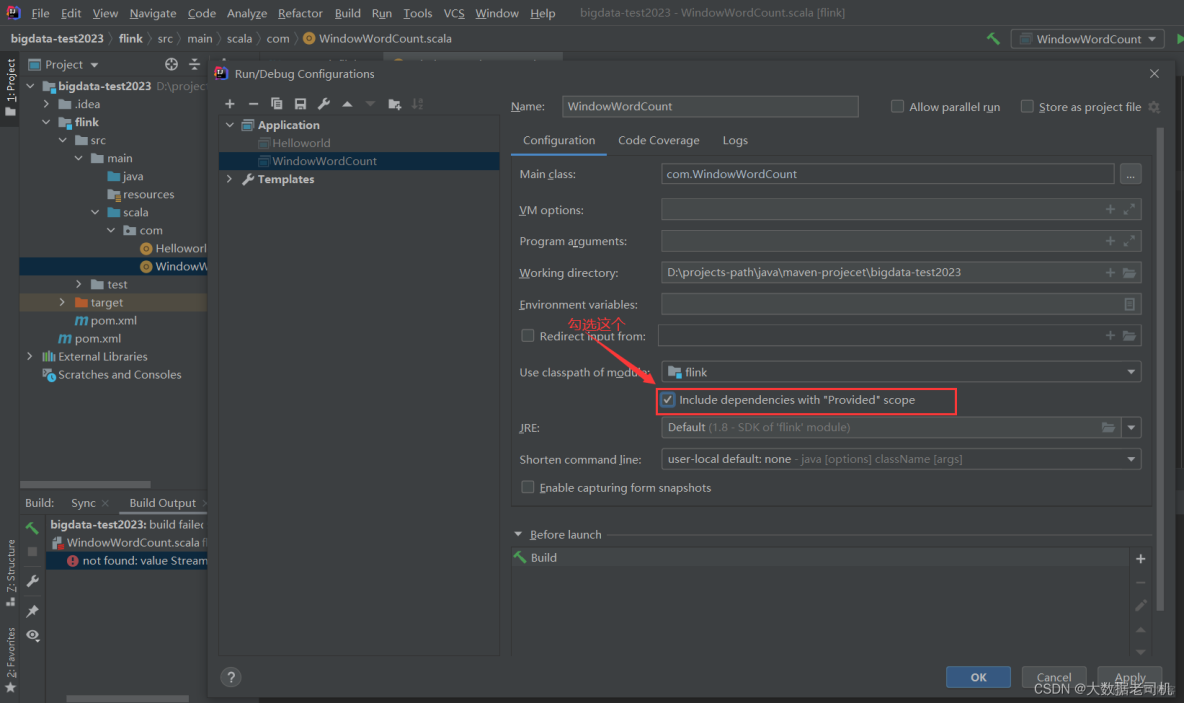

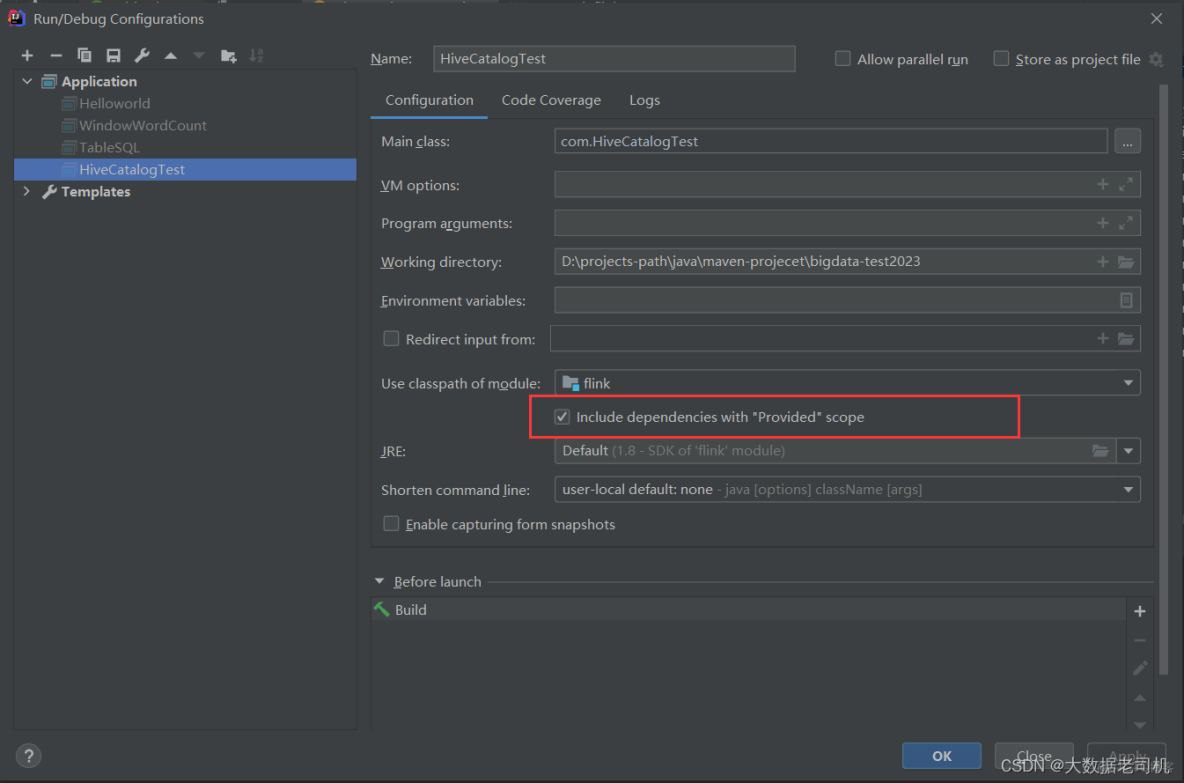

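[Problem] When running a Maven project, IDEA does not load dependencies with provided scope, so the program fails at startup.

[Cause] With Run Application, IDEA leaves provided-scope dependencies off the runtime classpath. This follows Maven's meaning of provided (the runtime environment is expected to supply those jars) rather than being an IDEA bug.

[Solution] Change the Run/Debug configuration in IDEA so that provided-scope dependencies are added to the classpath: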

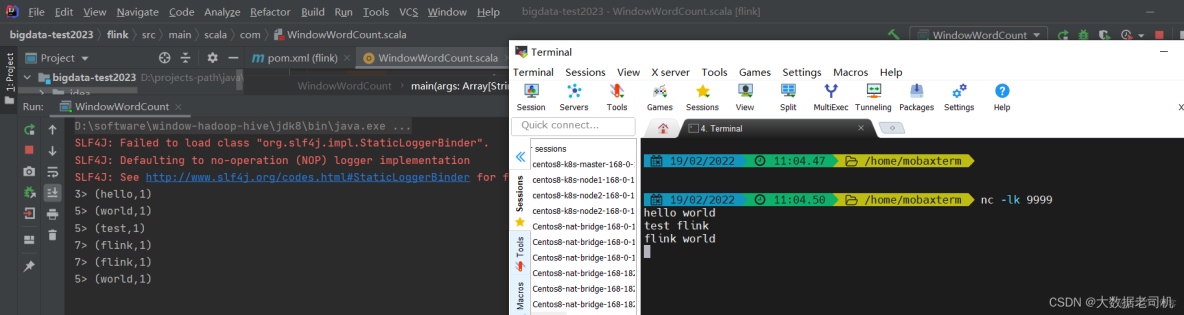

2、示例演示

(Example from the official documentation)

package com

import org.apache.flink.streaming.api.scala._

import org.apache.flink.streaming.api.windowing.assigners.TumblingProcessingTimeWindows

import org.apache.flink.streaming.api.windowing.time.Time

object WindowWordCount {

def main(args: Array[String]): Unit = {

val env = StreamExecutionEnvironment.getExecutionEnvironment

val text = env.socketTextStream("localhost", 9999)

val counts = text.flatMap { _.toLowerCase.split("\\W+") filter { _.nonEmpty } }

.map { (_, 1) }

.keyBy(_._1)

.window(TumblingProcessingTimeWindows.of(Time.seconds(5)))

.sum(1)

counts.print()

env.execute("Window Stream WordCount")

}

}


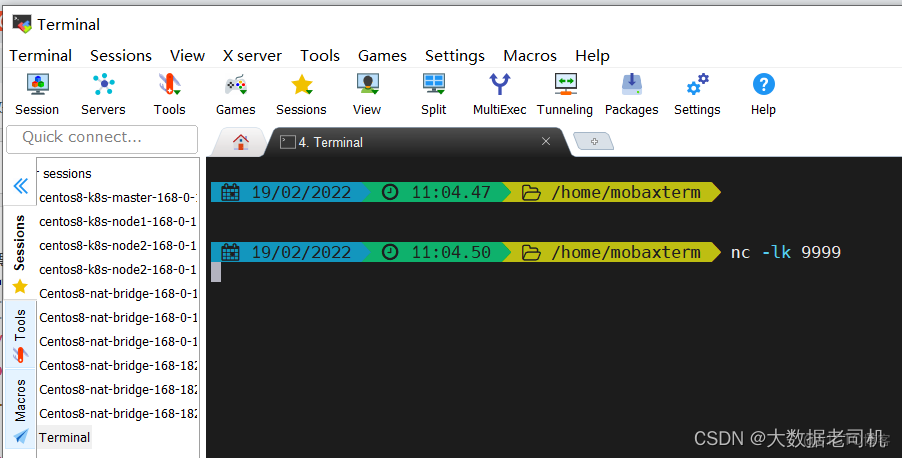
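To see what the flatMap, map, keyBy and sum steps produce for the lines that arrive within one window, here is a plain-Java sketch of the same tokenising and counting logic. It does not use Flink at all; the class name WindowWordCountSketch and the sample lines are made up for illustration.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Illustration only: mimics the per-window word count without any Flink dependency.
final class WindowWordCountSketch {

    /** Lower-cases each line, splits it on non-word characters and counts the words. */
    static Map<String, Integer> countWords(List<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String line : lines) {
            Arrays.stream(line.toLowerCase().split("\\W+"))
                  .filter(word -> !word.isEmpty())                         // same as filter { _.nonEmpty }
                  .forEach(word -> counts.merge(word, 1, Integer::sum));   // same as map + keyBy + sum
        }
        return counts;
    }

    public static void main(String[] args) {
        // Pretend these two lines arrived within the same 5-second window.
        System.out.println(countWords(List.of("Hello Flink", "hello world")));
    }
}
```

In the real job each window is counted independently, so a word typed in the next 5-second window starts again from 1.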

Run

Start a service listening on port 9999 from the command line:

$ nc -lk 9999

Run the test
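On Windows 10 the nc command is often not available. As a rough stand-in, the sketch below opens port 9999 with plain Java sockets and forwards whatever you type to the first client that connects (here, the Flink job). The class LineServer and its serveOnce method are hypothetical helpers, not part of Flink, and unlike nc -lk this serves a single connection only.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.OutputStreamWriter;
import java.io.Writer;
import java.net.ServerSocket;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

// Hypothetical helper: a very small replacement for "nc -lk 9999".
final class LineServer {

    /** Waits for one client, then sends it every line read from input, each ended with '\n'. */
    static void serveOnce(ServerSocket server, BufferedReader input) throws IOException {
        try (Socket client = server.accept();
             Writer out = new OutputStreamWriter(client.getOutputStream(), StandardCharsets.UTF_8)) {
            String line;
            while ((line = input.readLine()) != null) {
                out.write(line);
                out.write('\n');
                out.flush(); // push each line to the client immediately
            }
        }
    }

    public static void main(String[] args) throws IOException {
        try (ServerSocket server = new ServerSocket(9999);
             BufferedReader stdin = new BufferedReader(
                     new InputStreamReader(System.in, StandardCharsets.UTF_8))) {
            System.out.println("Listening on port 9999. Start the Flink job, then type lines here.");
            serveOnce(server, stdin);
        }
    }
}
```

Start this class first, then run WindowWordCount, and type a few words into the console of LineServer.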

5) Table API & SQL configuration

1. Maven configuration

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-planner_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-common</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

AI寫代碼

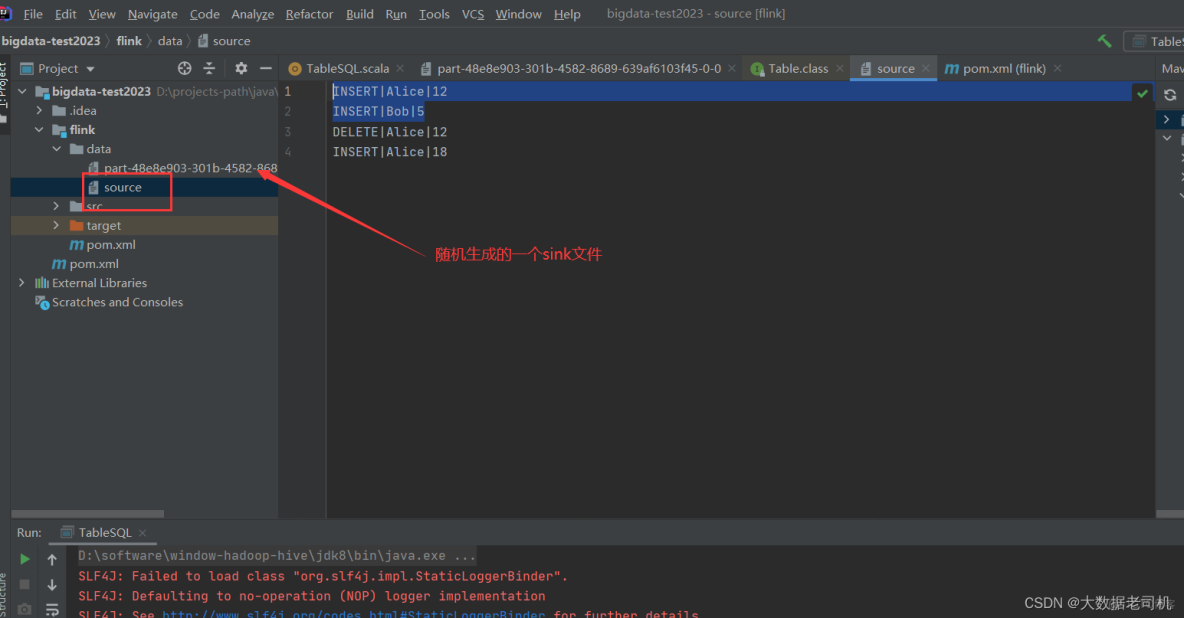

xml2、示例演示

這裏使用filesystem,不需要引用相應得maven配置,像kafka,ES等連接器是需要引入相應的maven配置,但是這裏使用到了format csv,所以得引入相應得配置,配置如下:

更多連接器的介紹,你看官方文檔

<!-- csv format, used in the example below -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-csv</artifactId>

<version>1.14.3</version>

</dependency>

Source code

package com

import org.apache.flink.table.api._

object TableSQL {

def main(args: Array[String]): Unit = {

val settings = EnvironmentSettings.inStreamingMode()

val tableEnv = TableEnvironment.create(settings)

// create an output Table

val schema = Schema.newBuilder()

.column("a", DataTypes.STRING())

.column("b", DataTypes.STRING())

.column("c", DataTypes.STRING())

.build()

tableEnv.createTemporaryTable("CsvSourceTable", TableDescriptor.forConnector("filesystem")

.schema(schema)

.option("path", "flink/data/source")

.format(FormatDescriptor.forFormat("csv")

.option("field-delimiter", "|")

.build())

.build())

tableEnv.createTemporaryTable("CsvSinkTable", TableDescriptor.forConnector("filesystem")

.schema(schema)

.option("path", "flink/data/")

.format(FormatDescriptor.forFormat("csv")

.option("field-delimiter", "|")

.build())

.build())

// Build a query

val sourceTable = tableEnv.sqlQuery("SELECT * FROM CsvSourceTable limit 2")

// Write the query result to the downstream sink table

sourceTable.executeInsert("CsvSinkTable")

}

}
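The example reads pipe-delimited rows with three string columns from flink/data/source, so that directory needs at least one file before the job is run. A minimal sketch for creating some test data is shown below; the file name sample.csv and the row values are invented, and the relative path assumes the program is started from the project root.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.List;

// Illustration only: writes a small pipe-delimited file for CsvSourceTable to read.
final class SampleSourceData {

    /** Creates sourceDir if needed and writes three rows with columns a|b|c. */
    static Path writeSample(Path sourceDir) throws IOException {
        Files.createDirectories(sourceDir);
        Path file = sourceDir.resolve("sample.csv"); // hypothetical file name
        List<String> rows = List.of("1|alice|click", "2|bob|view", "3|carol|buy");
        return Files.write(file, rows, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) throws IOException {
        // Same relative path as the "path" option of CsvSourceTable.
        System.out.println("Wrote " + writeSample(Paths.get("flink", "data", "source")));
    }
}
```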

6) HiveCatalog

1. Maven configuration

Basic configuration

<!-- Flink Dependency -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-hive_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-api-java-bridge_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<!-- Hive Dependency -->

<dependency>

<groupId>org.apache.hive</groupId>

<artifactId>hive-exec</artifactId>

<version>3.1.2</version>

<scope>provided</scope>

</dependency>

[Tip] When a dependency's scope is provided, the Run configuration of the class you run in IDEA must be set to add provided-scope dependencies to the classpath.

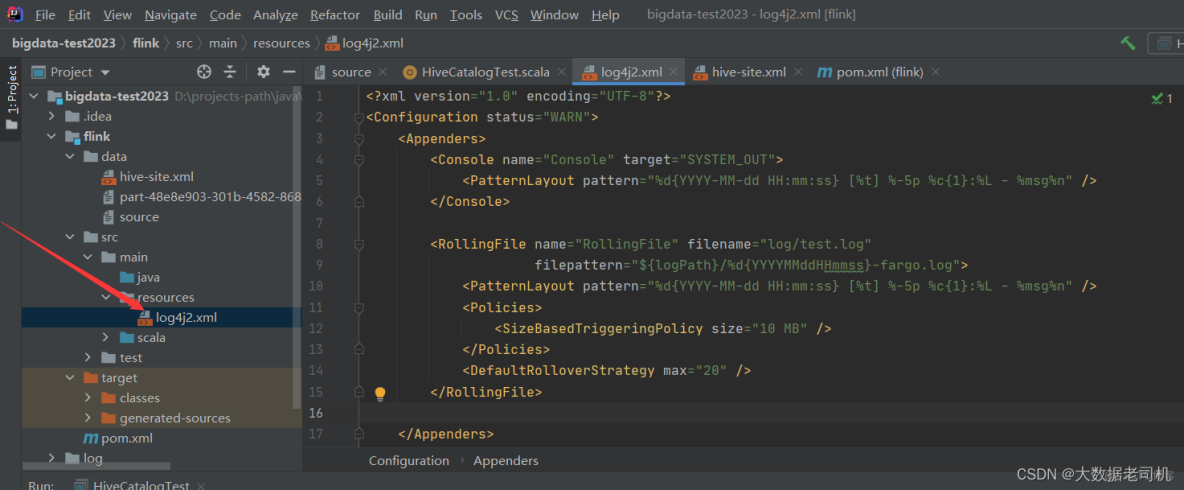

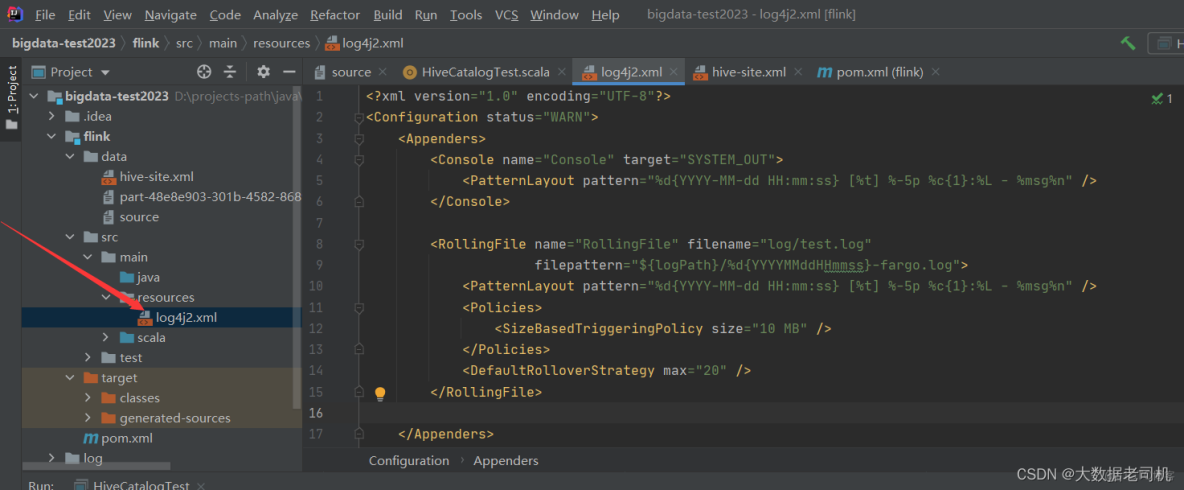

Log4j2 configuration (log4j2.xml)

<?xml version="1.0" encoding="UTF-8"?>

<Configuration status="WARN">

<Appenders>

<Console name="Console" target="SYSTEM_OUT">

<PatternLayout pattern="%d{yyyy-MM-dd HH:mm:ss} [%t] %-5p %c{1}:%L - %msg%n" />

</Console>

<RollingFile name="RollingFile" filename="log/test.log"

filepattern="${logPath}/%d{yyyyMMddHHmmss}-fargo.log">

<PatternLayout pattern="%d{yyyy-MM-dd HH:mm:ss} [%t] %-5p %c{1}:%L - %msg%n" />

<Policies>

<SizeBasedTriggeringPolicy size="10 MB" />

</Policies>

<DefaultRolloverStrategy max="20" />

</RollingFile>

</Appenders>

<Loggers>

<Root level="info">

<AppenderRef ref="Console" />

<AppenderRef ref="RollingFile" />

</Root>

</Loggers>

</Configuration>
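A note on the year letters in the date pattern: in SimpleDateFormat-style patterns, which Log4j2's %d conversion accepts, lowercase y is the calendar year and uppercase Y is the week-based year. The two differ only around New Year, which makes the mistake easy to miss. The sketch below uses java.time rather than Log4j2 itself to show the difference; the class name YearPatternDemo is made up.

```java
import java.time.LocalDate;
import java.time.format.DateTimeFormatter;
import java.util.Locale;

// Illustration only: compares the calendar year (y) with the week-based year (Y).
final class YearPatternDemo {

    /** Formats the date with the given pattern, using US week rules for the Y letter. */
    static String format(String pattern, LocalDate date) {
        return DateTimeFormatter.ofPattern(pattern, Locale.US).format(date);
    }

    public static void main(String[] args) {
        LocalDate lastDayOf2024 = LocalDate.of(2024, 12, 31);
        // Calendar year: stays in 2024.
        System.out.println("yyyy-MM-dd -> " + format("yyyy-MM-dd", lastDayOf2024));
        // Week-based year: this day already belongs to the first week of 2025.
        System.out.println("YYYY-MM-dd -> " + format("YYYY-MM-dd", lastDayOf2024));
    }
}
```

For log timestamps the calendar year is almost always what is wanted, so yyyy is the safer choice.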

hive-site.xml configuration

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- JDBC URL of the MySQL database used by the metastore; the database (hive here) is created automatically if it does not exist, and you can choose your own name -->

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true&amp;useSSL=false&amp;serverTimezone=Asia/Shanghai</value>

</property>

<!-- MySQL JDBC driver -->

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>MySQL JDBC driver class</description>

</property>

<!-- MySQL user name -->

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

<description>user name for connecting to mysql server</description>

</property>

<!-- MySQL password -->

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

<description>password for connecting to mysql server</description>

</property>

<property>

<name>hive.metastore.uris</name>

<value>thrift://localhost:9083</value>

<description>IP address (or fully-qualified domain name) and port of the metastore host</description>

</property>

<!-- host -->

<property>

<name>hive.server2.thrift.bind.host</name>

<value>localhost</value>

<description>Bind host on which to run the HiveServer2 Thrift service.</description>

</property>

<!-- HiveServer2 port; the default is 10000, a non-default port is used here to tell the two apart -->

<property>

<name>hive.server2.thrift.port</name>

<value>10001</value>

</property>

<property>

<name>hive.metastore.schema.verification</name>

<value>true</value>

</property>

</configuration>
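One detail that is easy to get wrong in hive-site.xml: inside an XML file, the & that separates JDBC URL parameters has to be written as &amp;, otherwise the file is not well-formed and cannot be parsed. The sketch below checks this with the JDK's own XML parser; the class XmlAmpersandCheck is a made-up helper and the snippet it parses is a cut-down stand-in for the real file.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.xml.sax.SAXException;

// Illustration only: shows that a raw '&' in an XML value is rejected by the parser.
final class XmlAmpersandCheck {

    /** Parses the XML text and returns the content of its first <value> element. */
    static String readValue(String xml) throws Exception {
        Document doc = DocumentBuilderFactory.newInstance()
                .newDocumentBuilder()
                .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
        return doc.getElementsByTagName("value").item(0).getTextContent();
    }

    public static void main(String[] args) throws Exception {
        String escaped = "<property><value>jdbc:mysql://localhost:3306/hive"
                + "?useSSL=false&amp;serverTimezone=Asia/Shanghai</value></property>";
        // The parser turns &amp; back into a plain '&' in the value it returns.
        System.out.println("escaped -> " + readValue(escaped));

        String raw = escaped.replace("&amp;", "&");
        try {
            readValue(raw);
        } catch (SAXException e) {
            System.out.println("raw '&' rejected: " + e.getMessage());
        }
    }
}
```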

[Tip] The metastore and hiveserver2 services must be running. If you are not sure how to start them, see my earlier article: Big Data Hadoop: Deploying a Hadoop + Hive Environment (Windows 10)

$ hive --service metastore

$ hive --service hiveserver2

2. Guava conflict between Hadoop and Hive

[Problem] Hadoop and hive-exec-3.1.2 ship conflicting Guava versions, which makes the Flink job fail at startup.

[Solution] Delete guava-19.0.jar from the %HIVE_HOME%\lib directory, then copy %HADOOP_HOME%\share\hadoop\common\lib\guava-27.0-jre.jar into %HIVE_HOME%\lib.

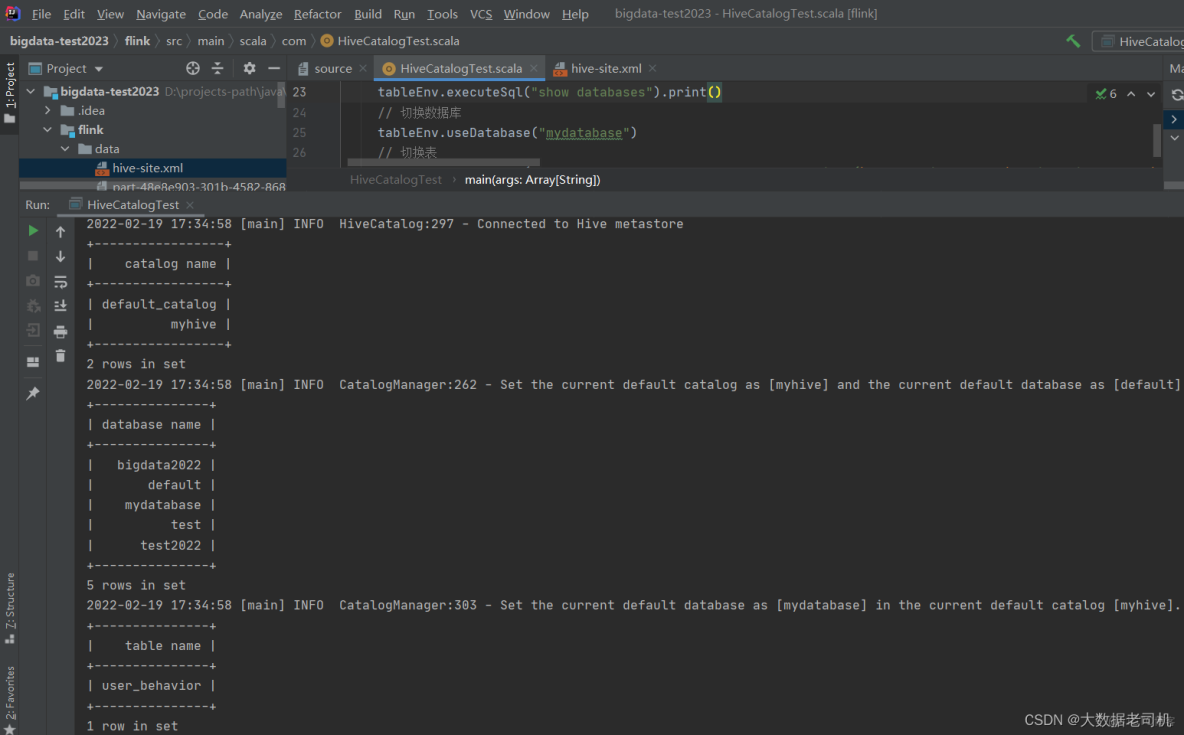

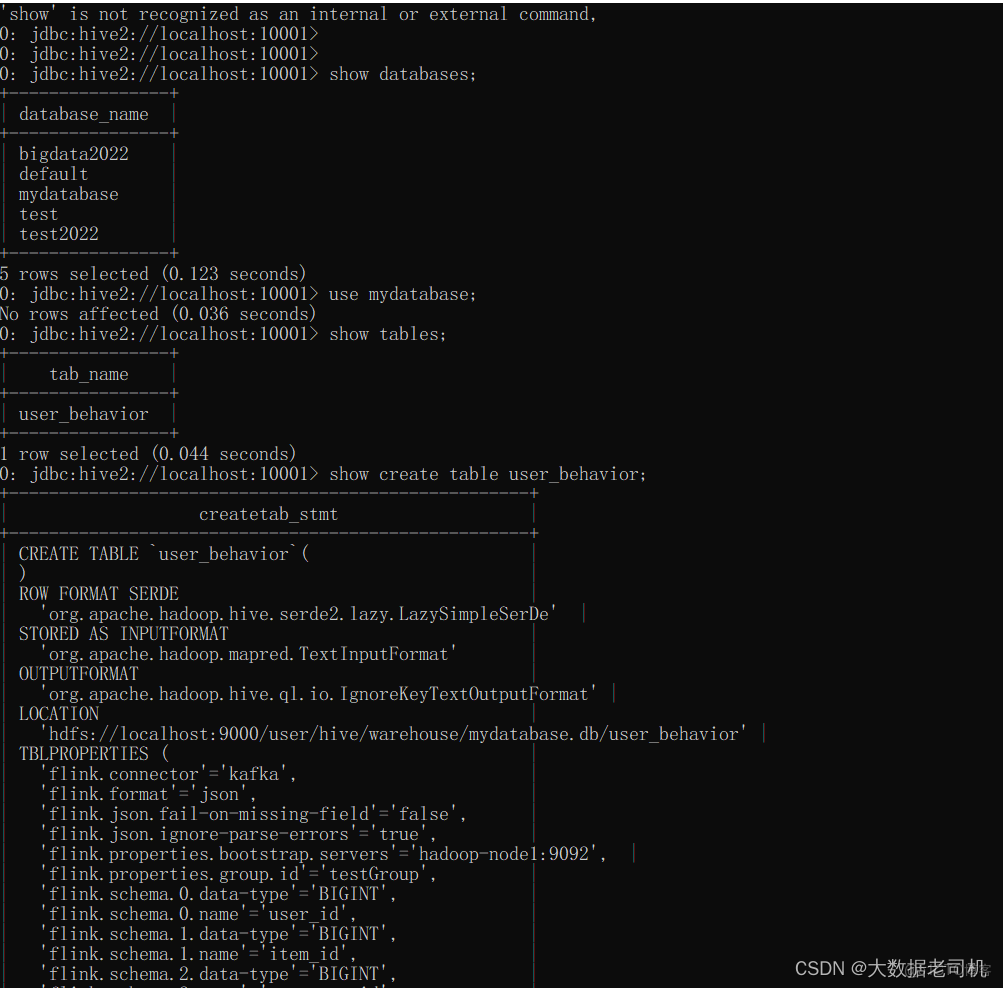
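If you are unsure which Guava jar a program actually picks up, a small diagnostic like the one below can help. It asks the JVM where a class was loaded from. The class name WhichJar is made up, and com.google.common.base.Preconditions is used only because it is a well-known Guava class; run it with the same classpath as the failing program.

```java
import java.security.CodeSource;

// Illustration only: prints the jar (or directory) a class is loaded from.
final class WhichJar {

    /** Returns the location of the class, or a marker if it is a JDK class or missing. */
    static String locate(String className) {
        try {
            // initialize=false: find the class without running its static initializers
            Class<?> type = Class.forName(className, false, WhichJar.class.getClassLoader());
            CodeSource source = type.getProtectionDomain().getCodeSource();
            return source == null ? "(JDK runtime)" : String.valueOf(source.getLocation());
        } catch (ClassNotFoundException | LinkageError e) {
            return "(not on classpath)";
        }
    }

    public static void main(String[] args) {
        System.out.println("Guava loaded from: " + locate("com.google.common.base.Preconditions"));
    }
}
```

If the printed path points at an older Guava jar than expected, that jar is the one to replace.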

3. Example

package com

import org.apache.flink.table.api.{EnvironmentSettings, TableEnvironment}

import org.apache.flink.table.catalog.hive.HiveCatalog

object HiveCatalogTest {

def main(args: Array[String]): Unit = {

val settings = EnvironmentSettings.inStreamingMode()

val tableEnv = TableEnvironment.create(settings)

val name = "myhive"

val defaultDatabase = "default"

val hiveConfDir = "flink/data/"

val hive = new HiveCatalog(name, defaultDatabase, hiveConfDir)

// Register the catalog; the registration disappears when the session ends

tableEnv.registerCatalog("myhive", hive)

// List all catalogs

tableEnv.executeSql("show catalogs").print()

// Switch to the myhive catalog

tableEnv.useCatalog("myhive")

// Create a database; it is persisted in Hive and still exists after the session ends

tableEnv.executeSql("CREATE DATABASE IF NOT EXISTS mydatabase")

// List all databases

tableEnv.executeSql("show databases").print()

// Switch to the database

tableEnv.useDatabase("mydatabase")

// Create a table

tableEnv.executeSql("CREATE TABLE IF NOT EXISTS user_behavior (\n user_id BIGINT,\n item_id BIGINT,\n category_id BIGINT,\n behavior STRING,\n ts TIMESTAMP(3)\n) WITH (\n 'connector' = 'kafka',\n 'topic' = 'user_behavior',\n 'properties.bootstrap.servers' = 'hadoop-node1:9092',\n 'properties.group.id' = 'testGroup',\n 'format' = 'json',\n 'json.fail-on-missing-field' = 'false',\n 'json.ignore-parse-errors' = 'true'\n)")

tableEnv.executeSql("show tables").print()

}

}

As shown below, connecting with the Hive client confirms that the database and table created by the program above still exist.

The check above shows that everything works. Remember that whenever you use a connector during development, you also need to add its Maven dependency.

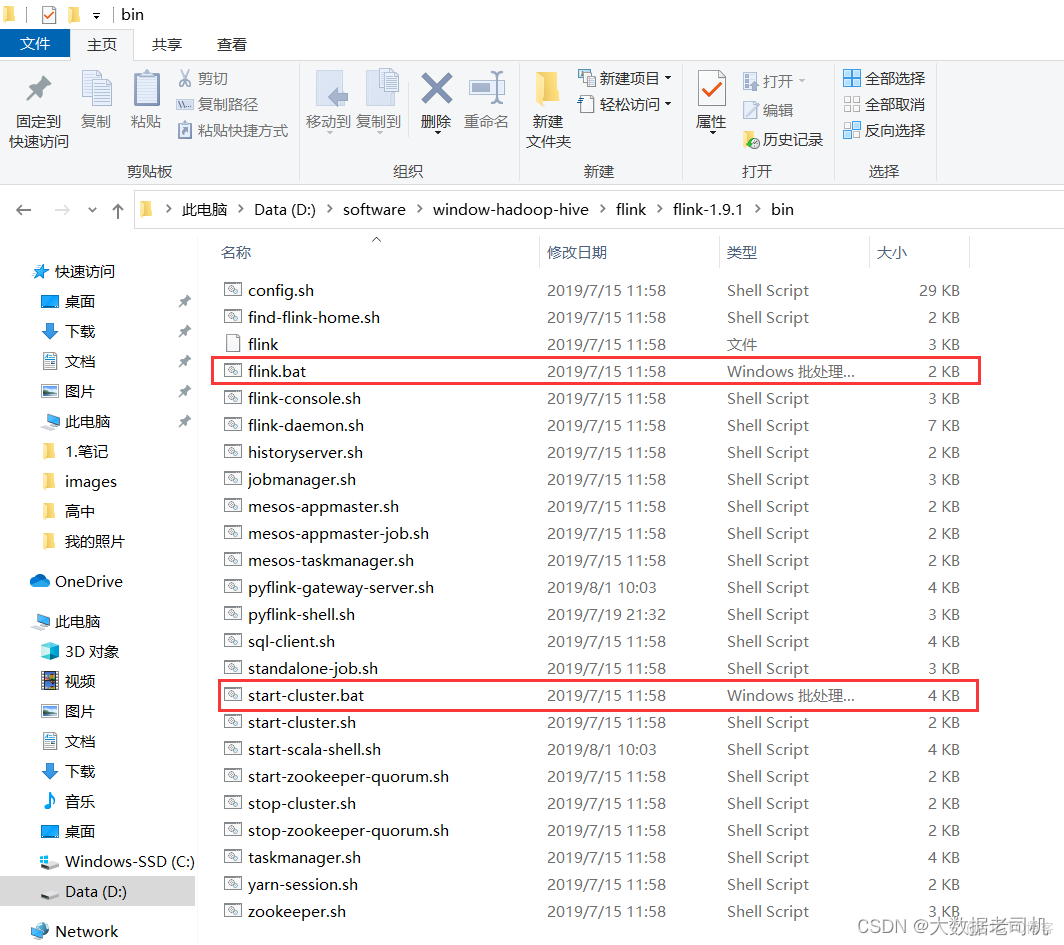

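7) Download Flink and start a local cluster (Windows)

Download page: https://flink.apache.org/downloads.html

flink-1.14.3:https://dlcdn.apache.org/flink/flink-1.14.3/flink-1.14.3-bin-scala_2.12.tgz

[Tip] Newer releases no longer ship start-cluster.bat and flink.cmd, but they can be copied over from an older release. Download the older release below:

flink-1.9.1:https://archive.apache.org/dist/flink/flink-1.9.1/flink-1.9.1-bin-scala_2.11.tgz

In practice you only need to copy the following two files from flink-1.9.1 into the new release.

The download is slow, so the two files are provided here as well.

flink.cmd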

::###############################################################################

:: Licensed to the Apache Software Foundation (ASF) under one

:: or more contributor license agreements. See the NOTICE file

:: distributed with this work for additional information

:: regarding copyright ownership. The ASF licenses this file

:: to you under the Apache License, Version 2.0 (the

:: "License"); you may not use this file except in compliance

:: with the License. You may obtain a copy of the License at

::

:: http://www.apache.org/licenses/LICENSE-2.0

::

:: Unless required by applicable law or agreed to in writing, software

:: distributed under the License is distributed on an "AS IS" BASIS,

:: WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

:: See the License for the specific language governing permissions and

:: limitations under the License.

::###############################################################################

@echo off

setlocal

SET bin=%~dp0

SET FLINK_HOME=%bin%..

SET FLINK_LIB_DIR=%FLINK_HOME%\lib

SET FLINK_PLUGINS_DIR=%FLINK_HOME%\plugins

SET JVM_ARGS=-Xmx512m

SET FLINK_JM_CLASSPATH=%FLINK_LIB_DIR%\*

java %JVM_ARGS% -cp "%FLINK_JM_CLASSPATH%"; org.apache.flink.client.cli.CliFrontend %*

endlocal


start-cluster.bat

::###############################################################################

:: Licensed to the Apache Software Foundation (ASF) under one

:: or more contributor license agreements. See the NOTICE file

:: distributed with this work for additional information

:: regarding copyright ownership. The ASF licenses this file

:: to you under the Apache License, Version 2.0 (the

:: "License"); you may not use this file except in compliance

:: with the License. You may obtain a copy of the License at

::

:: http://www.apache.org/licenses/LICENSE-2.0

::

:: Unless required by applicable law or agreed to in writing, software

:: distributed under the License is distributed on an "AS IS" BASIS,

:: WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

:: See the License for the specific language governing permissions and

:: limitations under the License.

::###############################################################################

@echo off

setlocal EnableDelayedExpansion

SET bin=%~dp0

SET FLINK_HOME=%bin%..

SET FLINK_LIB_DIR=%FLINK_HOME%\lib

SET FLINK_PLUGINS_DIR=%FLINK_HOME%\plugins

SET FLINK_CONF_DIR=%FLINK_HOME%\conf

SET FLINK_LOG_DIR=%FLINK_HOME%\log

SET JVM_ARGS=-Xms1024m -Xmx1024m

SET FLINK_CLASSPATH=%FLINK_LIB_DIR%\*

SET logname_jm=flink-%username%-jobmanager.log

SET logname_tm=flink-%username%-taskmanager.log

SET log_jm=%FLINK_LOG_DIR%\%logname_jm%

SET log_tm=%FLINK_LOG_DIR%\%logname_tm%

SET outname_jm=flink-%username%-jobmanager.out

SET outname_tm=flink-%username%-taskmanager.out

SET out_jm=%FLINK_LOG_DIR%\%outname_jm%

SET out_tm=%FLINK_LOG_DIR%\%outname_tm%

SET log_setting_jm=-Dlog.file="%log_jm%" -Dlogback.configurationFile=file:"%FLINK_CONF_DIR%/logback.xml" -Dlog4j.configuration=file:"%FLINK_CONF_DIR%/log4j.properties"

SET log_setting_tm=-Dlog.file="%log_tm%" -Dlogback.configurationFile=file:"%FLINK_CONF_DIR%/logback.xml" -Dlog4j.configuration=file:"%FLINK_CONF_DIR%/log4j.properties"

:: Log rotation (quick and dirty)

CD "%FLINK_LOG_DIR%"

for /l %%x in (5, -1, 1) do (

SET /A y = %%x+1

RENAME "%logname_jm%.%%x" "%logname_jm%.!y!" 2> nul

RENAME "%logname_tm%.%%x" "%logname_tm%.!y!" 2> nul

RENAME "%outname_jm%.%%x" "%outname_jm%.!y!" 2> nul

RENAME "%outname_tm%.%%x" "%outname_tm%.!y!" 2> nul

)

RENAME "%logname_jm%" "%logname_jm%.0" 2> nul

RENAME "%logname_tm%" "%logname_tm%.0" 2> nul

RENAME "%outname_jm%" "%outname_jm%.0" 2> nul

RENAME "%outname_tm%" "%outname_tm%.0" 2> nul

DEL "%logname_jm%.6" 2> nul

DEL "%logname_tm%.6" 2> nul

DEL "%outname_jm%.6" 2> nul

DEL "%outname_tm%.6" 2> nul

for %%X in (java.exe) do (set FOUND=%%~$PATH:X)

if not defined FOUND (

echo java.exe was not found in PATH variable

goto :eof

)

echo Starting a local cluster with one JobManager process and one TaskManager process.

echo You can terminate the processes via CTRL-C in the spawned shell windows.

echo Web interface by default on http://localhost:8081/.

start java %JVM_ARGS% %log_setting_jm% -cp "%FLINK_CLASSPATH%"; org.apache.flink.runtime.entrypoint.StandaloneSessionClusterEntrypoint --configDir "%FLINK_CONF_DIR%" > "%out_jm%" 2>&1

start java %JVM_ARGS% %log_setting_tm% -cp "%FLINK_CLASSPATH%"; org.apache.flink.runtime.taskexecutor.TaskManagerRunner --configDir "%FLINK_CONF_DIR%" > "%out_tm%" 2>&1

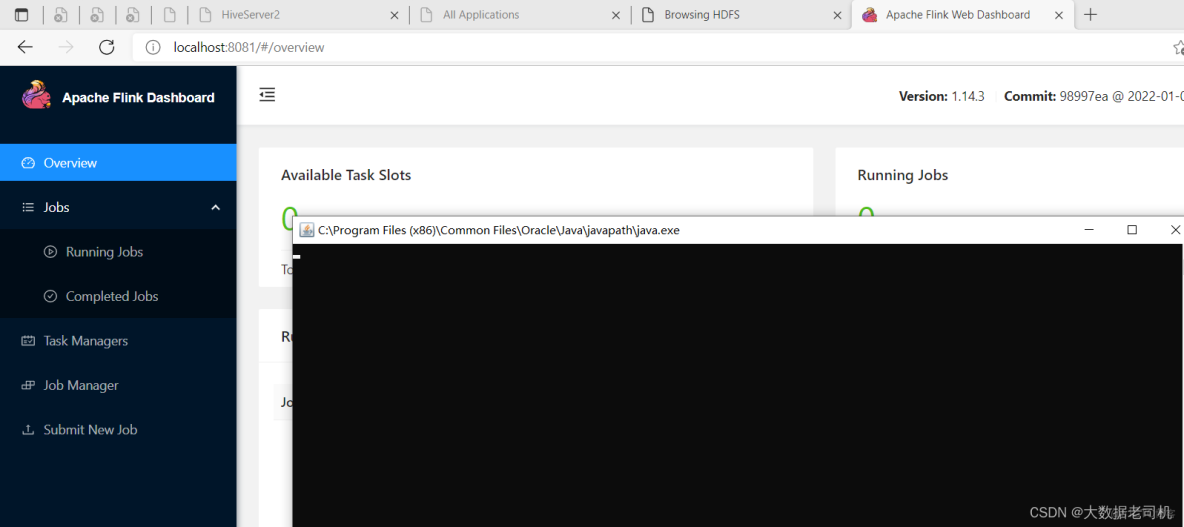

endlocal啓動flink集羣很簡單,只要雙擊start-cluster.bat

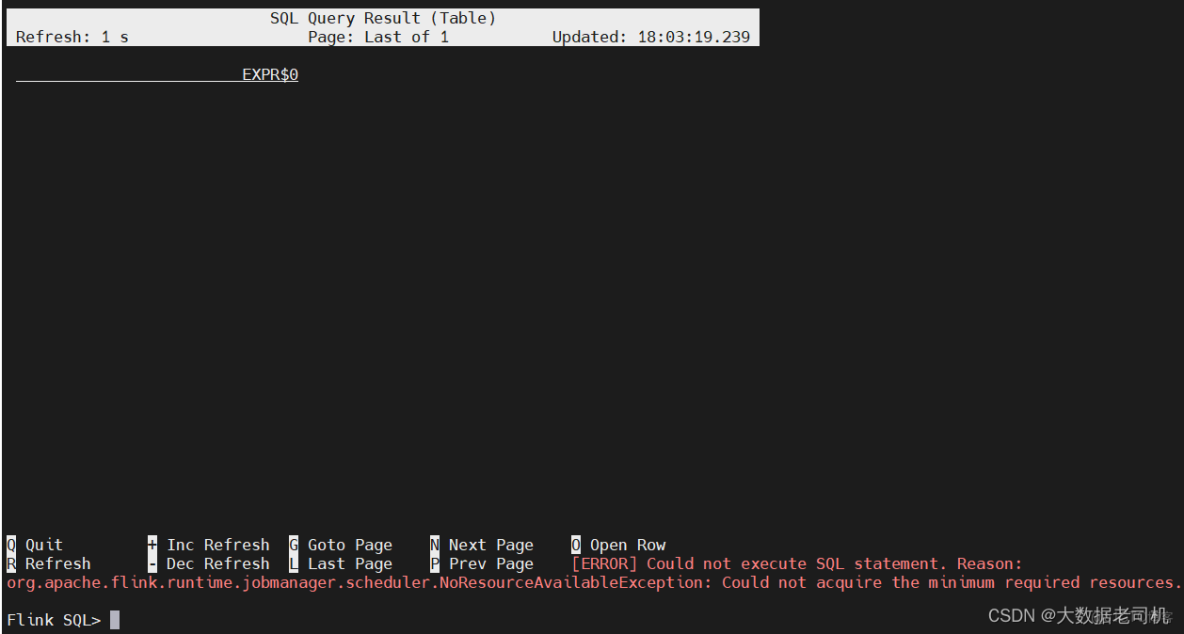

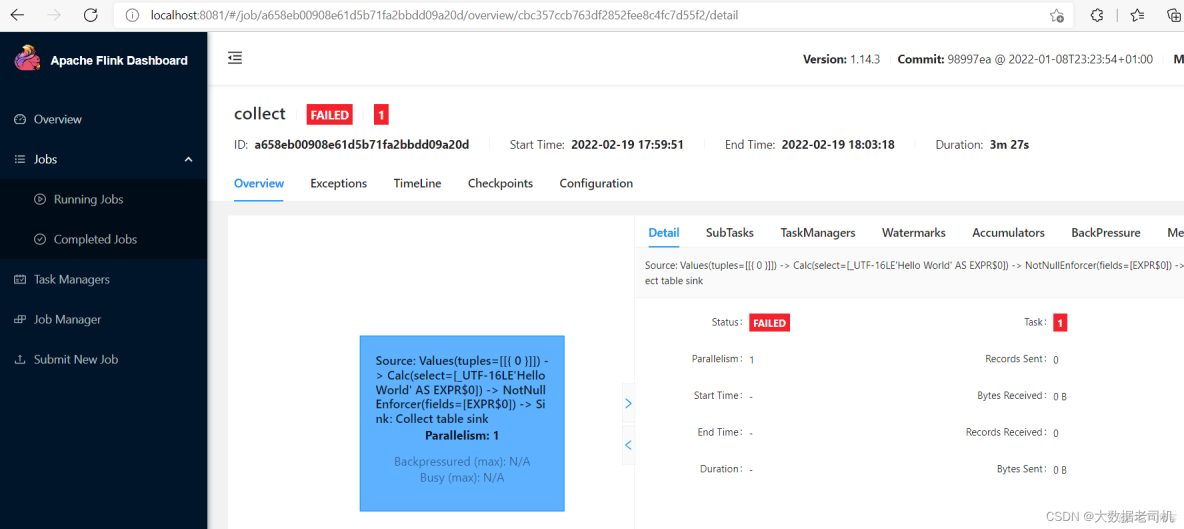

通過sql客户端驗證一下

$ SELECT 'Hello World';

AI寫代碼

bash

1

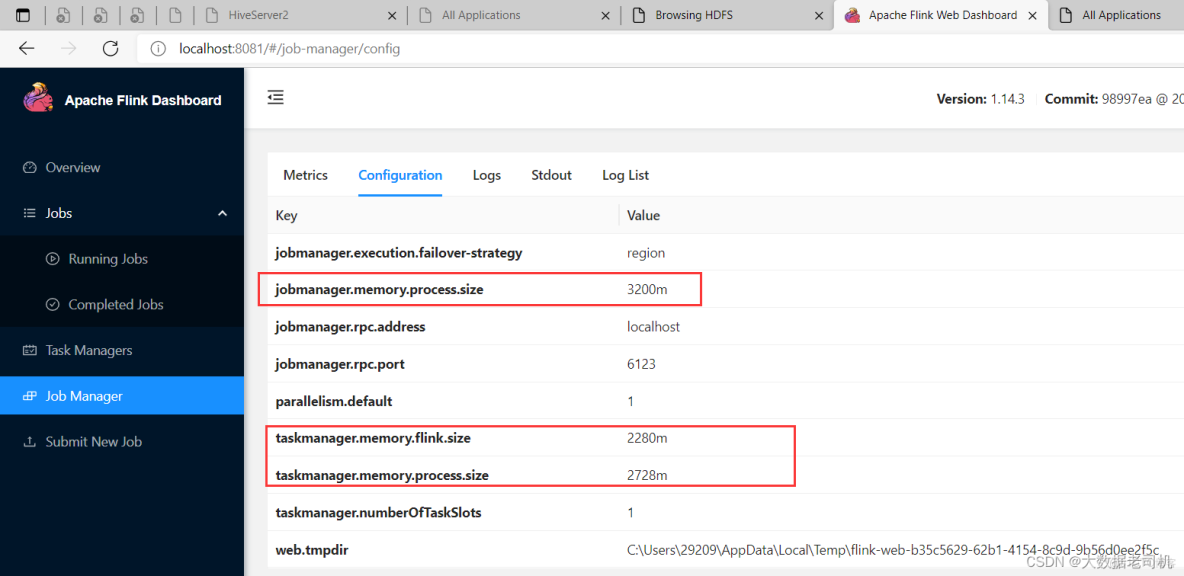

【錯誤】NoResourceAvailableException: Could not acquire the minimum required resources

【解決】是因為資源太小,不足以跑任務,擴大配置,修改如下配置:

jobmanager.memory.process.size: 3200m

taskmanager.memory.process.size: 2728m

taskmanager.memory.flink.size: 2280m

但是我這裏調大了還是太小了,自己電腦配置有限,如果有小夥伴的配置高,可以再調大驗證一下。

8) Complete configuration

1. Maven configuration

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<parent>

<artifactId>bigdata-test2023</artifactId>

<groupId>com.bigdata.test2023</groupId>

<version>1.0-SNAPSHOT</version>

</parent>

<modelVersion>4.0.0</modelVersion>

<artifactId>flink</artifactId>

<!-- DataStream API maven settings begin -->

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-scala_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_2.12</artifactId>

<version>1.14.3</version>

</dependency>

<!-- DataStream API maven settings end -->

<!-- Table and SQL maven settings begin-->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-planner_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<!-- Already declared above -->

<!--<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>-->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-common</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-csv</artifactId>

<version>1.14.3</version>

</dependency>

<!-- Table and SQL maven settings end-->

<!-- Hive Catalog maven settings begin -->

<!-- Flink Dependency -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-hive_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-api-java-bridge_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<!-- Hive Dependency -->

<dependency>

<groupId>org.apache.hive</groupId>

<artifactId>hive-exec</artifactId>

<version>3.1.2</version>

<scope>provided</scope>

</dependency>

<!-- Hive Catalog maven settings end -->

<!--hadoop start-->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-core</artifactId>

<version>3.3.1</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>3.3.1</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-common</artifactId>

<version>3.3.1</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-jobclient</artifactId>

<version>3.3.1</version>

<scope>provided</scope>

</dependency>

<!--hadoop end-->

</dependencies>

</project>

2. log4j2.xml configuration

<?xml version="1.0" encoding="UTF-8"?>

<Configuration status="WARN">

<Appenders>

<Console name="Console" target="SYSTEM_OUT">

<PatternLayout pattern="%d{yyyy-MM-dd HH:mm:ss} [%t] %-5p %c{1}:%L - %msg%n" />

</Console>

<RollingFile name="RollingFile" filename="log/test.log"

filepattern="${logPath}/%d{yyyyMMddHHmmss}-fargo.log">

<PatternLayout pattern="%d{yyyy-MM-dd HH:mm:ss} [%t] %-5p %c{1}:%L - %msg%n" />

<Policies>

<SizeBasedTriggeringPolicy size="10 MB" />

</Policies>

<DefaultRolloverStrategy max="20" />

</RollingFile>

</Appenders>

<Loggers>

<Root level="info">

<AppenderRef ref="Console" />

<AppenderRef ref="RollingFile" />

</Root>

</Loggers>

</Configuration>

3. hive-site.xml configuration

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- JDBC URL of the MySQL database used by the metastore; the database (hive here) is created automatically if it does not exist, and you can choose your own name -->

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true&amp;useSSL=false&amp;serverTimezone=Asia/Shanghai</value>

</property>

<!-- MySQL JDBC driver -->

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>MySQL JDBC driver class</description>

</property>

<!-- MySQL user name -->

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

<description>user name for connecting to mysql server</description>

</property>

<!-- MySQL password -->

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

<description>password for connecting to mysql server</description>

</property>

<property>

<name>hive.metastore.uris</name>

<value>thrift://localhost:9083</value>

<description>IP address (or fully-qualified domain name) and port of the metastore host</description>

</property>

<!-- host -->

<property>

<name>hive.server2.thrift.bind.host</name>

<value>localhost</value>

<description>Bind host on which to run the HiveServer2 Thrift service.</description>

</property>

<!-- HiveServer2 port; the default is 10000, a non-default port is used here to tell the two apart -->

<property>

<name>hive.server2.thrift.port</name>

<value>10001</value>

</property>

<property>

<name>hive.metastore.schema.verification</name>

<value>true</value>

</property>

</configuration>

VI. Configure the IDEA environment (Java)

1) Maven configuration

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<parent>

<artifactId>bigdata-test2023</artifactId>

<groupId>com.bigdata.test2023</groupId>

<version>1.0-SNAPSHOT</version>

</parent>

<modelVersion>4.0.0</modelVersion>

<artifactId>flink</artifactId>

<!-- DataStream API maven settings begin -->

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_2.12</artifactId>

<version>1.14.3</version>

</dependency>

<!-- DataStream API maven settings end -->

<!-- Table and SQL maven settings begin-->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-planner_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<!-- Already declared above -->

<!--<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>-->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-common</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-csv</artifactId>

<version>1.14.3</version>

</dependency>

<!-- Table and SQL maven settings end-->

<!-- Hive Catalog maven settings begin -->

<!-- Flink Dependency -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-hive_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-table-api-java-bridge_2.12</artifactId>

<version>1.14.3</version>

<scope>provided</scope>

</dependency>

<!-- Hive Dependency -->

<dependency>

<groupId>org.apache.hive</groupId>

<artifactId>hive-exec</artifactId>

<version>3.1.2</version>

<scope>provided</scope>

</dependency>

<!-- Hive Catalog maven settings end -->

<!--hadoop start-->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-core</artifactId>

<version>3.3.1</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>3.3.1</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-common</artifactId>

<version>3.3.1</version>

<scope>provided</scope>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-jobclient</artifactId>

<version>3.3.1</version>

<scope>provided</scope>

</dependency>

<!--hadoop end-->

</dependencies>

</project>

[Tip] log4j2.xml and hive-site.xml are in fact the same for Java and Scala; they are copied here once more for convenience.

2) log4j2.xml configuration

<?xml version="1.0" encoding="UTF-8"?>

<Configuration status="WARN">

<Appenders>

<Console name="Console" target="SYSTEM_OUT">

<PatternLayout pattern="%d{yyyy-MM-dd HH:mm:ss} [%t] %-5p %c{1}:%L - %msg%n" />

</Console>

<RollingFile name="RollingFile" filename="log/test.log"

filepattern="${logPath}/%d{yyyyMMddHHmmss}-fargo.log">

<PatternLayout pattern="%d{yyyy-MM-dd HH:mm:ss} [%t] %-5p %c{1}:%L - %msg%n" />

<Policies>

<SizeBasedTriggeringPolicy size="10 MB" />

</Policies>

<DefaultRolloverStrategy max="20" />

</RollingFile>

</Appenders>

<Loggers>

<Root level="info">

<AppenderRef ref="Console" />

<AppenderRef ref="RollingFile" />

</Root>

</Loggers>

</Configuration>

3) hive-site.xml configuration

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<!-- JDBC URL of the MySQL database used by the metastore; the database (hive here) is created automatically if it does not exist, and you can choose your own name -->

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true&amp;useSSL=false&amp;serverTimezone=Asia/Shanghai</value>

</property>

<!-- MySQL JDBC driver -->

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

<description>MySQL JDBC driver class</description>

</property>

<!-- MySQL user name -->

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

<description>user name for connecting to mysql server</description>

</property>

<!-- MySQL password -->

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

<description>password for connecting to mysql server</description>

</property>

<property>

<name>hive.metastore.uris</name>

<value>thrift://localhost:9083</value>

<description>IP address (or fully-qualified domain name) and port of the metastore host</description>

</property>

<!-- host -->

<property>

<name>hive.server2.thrift.bind.host</name>

<value>localhost</value>

<description>Bind host on which to run the HiveServer2 Thrift service.</description>

</property>

<!-- HiveServer2 port; the default is 10000, a non-default port is used here to tell the two apart -->

<property>

<name>hive.server2.thrift.port</name>

<value>10001</value>

</property>

<property>

<name>hive.metastore.schema.verification</name>

<value>true</value>

</property>

</configuration>

More big data content is on the way, so please be patient~