最近由於要使用Spark做POC,在本地搭建了相應的開發環境,Spark本身是使用Scala語言編寫的,當然也可以使用java來開發spark項目,但使用scala語言來開發更加簡潔,本文在IDEA開發工具中使用Maven來創建Scala工程

1:安裝Java SDK,Scala,及IDEA 集成開發環境

JDK下載地址:http://www.oracle.com/technetwork/java/javase/downloads/jdk8-downloads-2133151.html

Scala下載地址:http://www.scala-lang.org/download/all.html

Intellij IDEA下載地址:https://www.jetbrains.com/idea/download/download-thanks.html

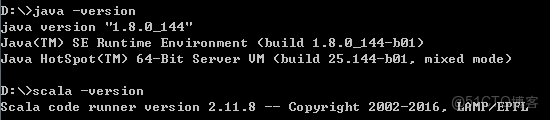

以下是我測試環境中 java 及 scala的版本:

IDEA使用的是社區版本:IDEA 2017.3.5 (Community Edition)

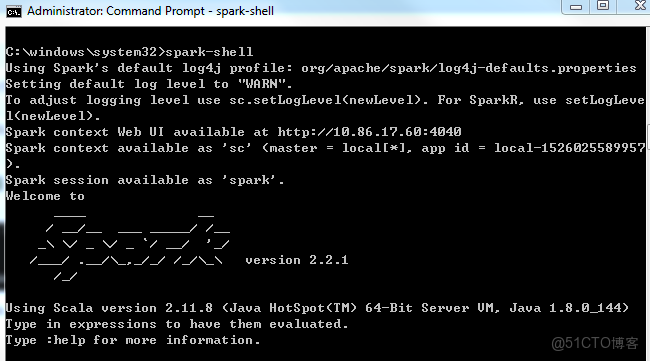

2:Spark的安裝

下載spark:https://spark.apache.org/downloads.html,選擇相應的版本,這裏選擇了2.2.1版本 spark-2.2.1-bin-hadoop2.7,並解壓到指定的目錄,添加環境變量:

SPARK_HOME:D:\Application\spark-2.2.1-bin-hadoop2.7

並添加以下路徑到Path變量中:%SPARK_HOME%\bin

這樣可以在命令行中啓動命令:spark-shell

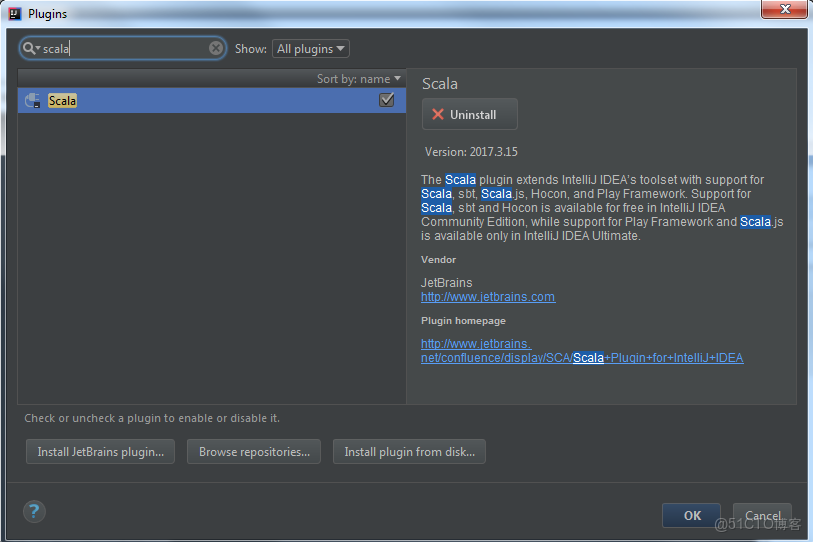

3:Scala插件的安裝

在IDEA中選擇 Configure -> Plugins,搜索scala,點擊安裝:

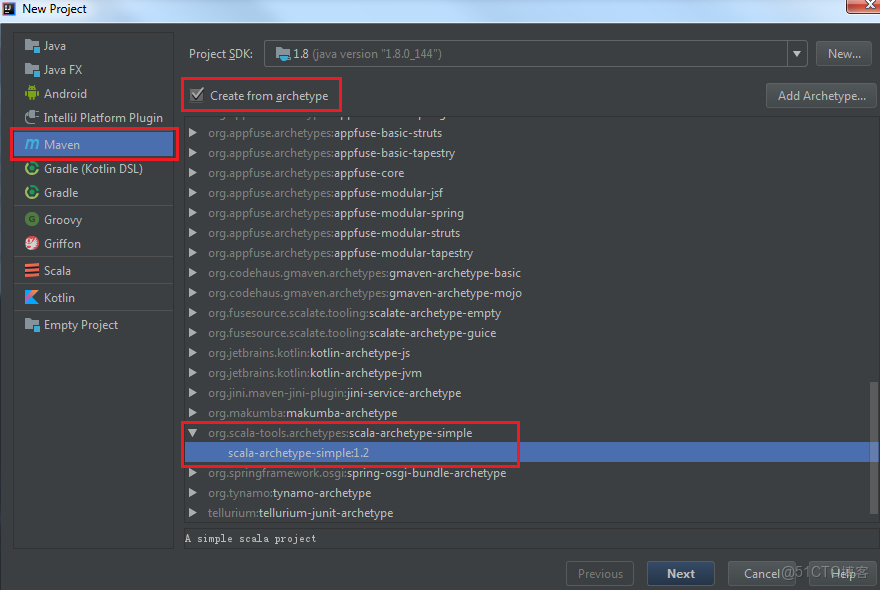

4:使用Maven來創建Scala 工程

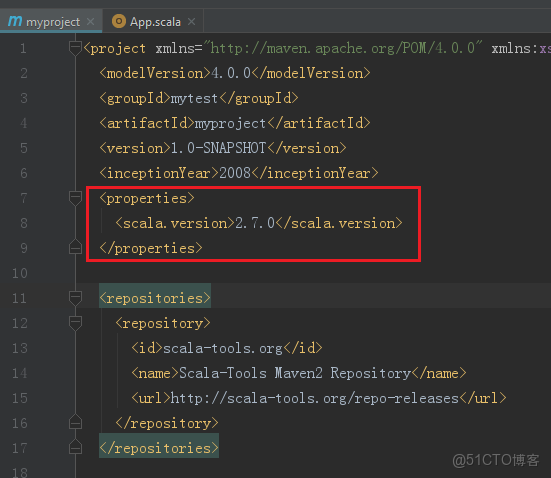

點擊 Next,設置好GroupId及ArtifactId,一路點擊到結束,期間根據實際情況可以修改Project name及代碼的路徑,最後生成Scala架構的代碼目錄及默認的pom.xml文件,在這裏需要注意一點,Scala的本機安裝的版本和Scala插件的版本不一致的問題,查看生成的默認pom.xml文件如下,發現版本使用的是2.7.0,而前面本機安裝的是2.11.8 ,

這樣創建的項目運行時會失敗,提示 "Error: Could not find or load main class ..."

查看前面安裝的Scala版本,相應的修改pom文件中的版本號為2.11.8,這就出現了另外一個問題,從Scala2.9以後,已經廢棄了Application類,而是使用了新的類App,於是修改代碼

|

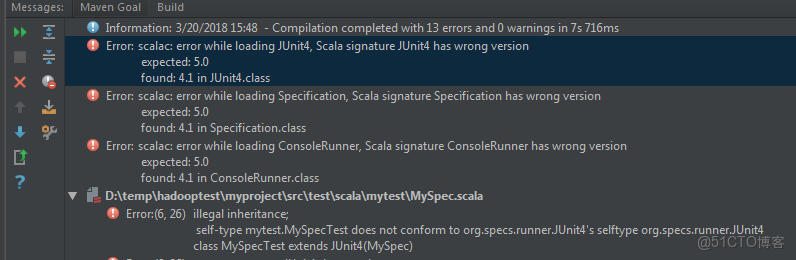

運行項目,單元測試報錯,提示scala signature 版本錯誤,先刪除MySpec.scala文件

修改pom文件,

5:添加對spark的支持,本地測試文件單詞統計

修改pom.xml

添加屬性 spark 版本,為本機安裝的spark版本

<spark.version>2.2.1</spark.version>添加依賴庫

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>${spark.version}</version>

</dependency>完整的pom.xml如下所示:

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>mytest</groupId>

<artifactId>myproject</artifactId>

<version>1.0-SNAPSHOT</version>

<inceptionYear>2008</inceptionYear>

<properties>

<scala.version>2.11.8</scala.version>

<spark.version>2.2.1</spark.version>

</properties>

<repositories>

<repository>

<id>scala-tools.org</id>

<name>Scala-Tools Maven2 Repository</name>

<url>http://scala-tools.org/repo-releases</url>

</repository>

</repositories>

<pluginRepositories>

<pluginRepository>

<id>scala-tools.org</id>

<name>Scala-Tools Maven2 Repository</name>

<url>http://scala-tools.org/repo-releases</url>

</pluginRepository>

</pluginRepositories>

<dependencies>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.4</version>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.specs</groupId>

<artifactId>specs</artifactId>

<version>1.2.5</version>

<scope>test</scope>

</dependency>

</dependencies>

<build>

<sourceDirectory>src/main/scala</sourceDirectory>

<testSourceDirectory>src/test/scala</testSourceDirectory>

<plugins>

<plugin>

<groupId>org.scala-tools</groupId>

<artifactId>maven-scala-plugin</artifactId>

<executions>

<execution>

<goals>

<goal>compile</goal>

<goal>testCompile</goal>

</goals>

</execution>

</executions>

<configuration>

<scalaVersion>${scala.version}</scalaVersion>

<args>

<arg>-target:jvm-1.5</arg>

</args>

</configuration>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-eclipse-plugin</artifactId>

<configuration>

<downloadSources>true</downloadSources>

<buildcommands>

<buildcommand>ch.epfl.lamp.sdt.core.scalabuilder</buildcommand>

</buildcommands>

<additionalProjectnatures>

<projectnature>ch.epfl.lamp.sdt.core.scalanature</projectnature>

</additionalProjectnatures>

<classpathContainers>

<classpathContainer>org.eclipse.jdt.launching.JRE_CONTAINER</classpathContainer>

<classpathContainer>ch.epfl.lamp.sdt.launching.SCALA_CONTAINER</classpathContainer>

</classpathContainers>

</configuration>

</plugin>

</plugins>

</build>

<reporting>

<plugins>

<plugin>

<groupId>org.scala-tools</groupId>

<artifactId>maven-scala-plugin</artifactId>

<configuration>

<scalaVersion>${scala.version}</scalaVersion>

</configuration>

</plugin>

</plugins>

</reporting>

</project>

修改scala代碼,引入spark庫:

package mytest

import org.apache.spark._

object wordcount {

def main(args: Array[String]) {

var masterUrl = "local[1]"

var inputPath = "D:\\temp\\mytext.txt"

var outputPath = "D:\\temp\\output"

println(s"masterUrl:${masterUrl}, inputPath: ${inputPath}, outputPath: ${outputPath}")

val sparkConf = new SparkConf().setMaster(masterUrl).setAppName("WordCount")

val sc = new SparkContext(sparkConf)

val rowRdd = sc.textFile(inputPath)

val resultRdd = rowRdd.flatMap(line => line.split("\\s+"))

.map(word => (word, 1)).reduceByKey(_ + _)

resultRdd.saveAsTextFile(outputPath)

}

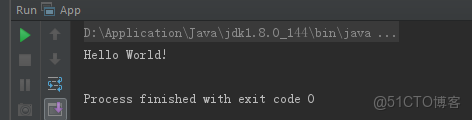

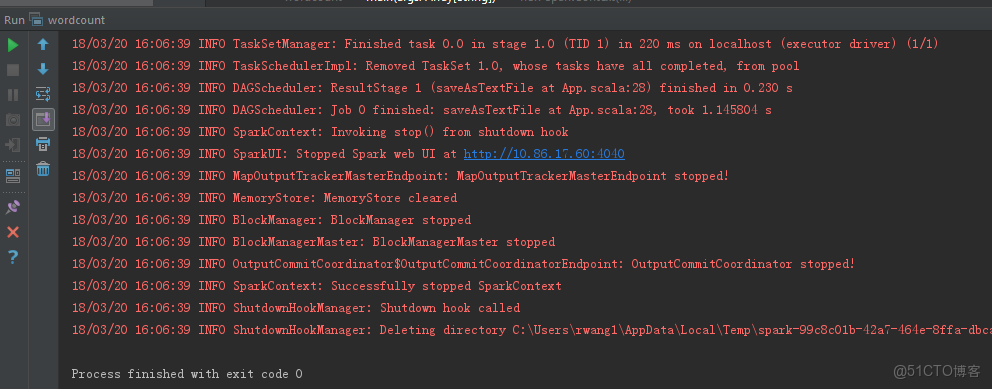

}再次運行工程,可以在窗口中看到已經成功運行spark,對輸入的文本進行詞彙統計,此時,spark運行的是本地模式,使用的是本地文件作為輸入

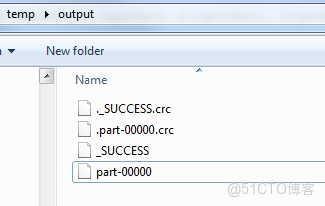

在輸出目錄,可以看到程序運行的結果